Hi everyone, I am back with another Storage Spaces Direct post. This time I would like to talk about planning S2D clusters, from 2-node up to 16-node clusters, and what you need to think about when doing your planning. This will be a multi-part series covering a 4-node cluster, adding nodes to an existing cluster, All Flash, Hybrid, MRV and the big one, a 16-node setup.

With S2D and hyperconverged infrastructure you get the advantage of having compute and storage in the same nodes. A lot of people talk about the problem of balancing the ratio between CPU, memory and disk in a hyperconverged scenario: do you end up with too much CPU, too much memory or too much disk? Compare that with a traditional setup, where a storage solution is connected to a compute/memory cluster via Fibre Channel, InfiniBand or iSCSI over Ethernet. There, the bottleneck has for the most part been the network, especially with all-flash SANs: either not enough bandwidth or simply too much latency. But let's face it, that kind of setup is not even close to S2D in storage performance.

With Storage Spaces Direct you have a direct connection to the storage in each host, and high-bandwidth SMB/RDMA connections between the servers over Ethernet. Link speeds of 10, 25, 40 and 100 Gbit give a whole new perspective on how to configure your setup and how your VMs will perform on it.

In these configurations I will base my server configurations on the Lenovo WSSD certified nodes, as I will be working closely with Lenovo at my new job. I will look at a few different disk scenarios along with 2-16 node setups, but I will start with a 4-node solution.

The disk options we can choose from are:

- Hybrid with NVMe + HDD storage

- All Flash

- SSD + HDD

- And as a last scenario, NVMe + SSD + HDD

Things to consider

When it comes to sizing a new cluster to replace an existing solution, it can be hard to get it right. How much storage is being used, how much CPU and how much memory? Hardware gets better over the years, so sizing CPUs is not always easy. Disk and memory are fairly easy: look at what you have, and try to estimate what you will need in 3-5 years or so. Most clusters also give you the option of scaling out later, by adding memory, disks or a node. For CPU, look at your current workload. If it's a 3-year-old solution running 12 cores and you are barely hitting 10% CPU, you will most likely be well off with 2 Intel Xeon Silver 4110 8-core CPUs, as those 16 cores are covered by 1 Datacenter license per server.
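A rough way to sanity-check the example above is to work out how many cores' worth of work the old hosts actually do, then spread that over the new cores. This is a minimal Python sketch; it assumes a new core is no faster than an old one, so it overstates the real load on newer CPUs, and the function names are illustrative only.

```python
def busy_cores(cores: int, avg_utilization: float) -> float:
    """Cores' worth of work the current hosts do on average."""
    return cores * avg_utilization


def projected_utilization(old_cores: int, old_util: float, new_cores: int) -> float:
    """Average utilization if the same work moves to new_cores.

    Conservative: treats a new core as no faster than an old one.
    """
    return busy_cores(old_cores, old_util) / new_cores


# Example from the text: 12 cores at ~10% vs. 2 x 8-core Xeon Silver 4110.
OLD_CORES, OLD_UTIL = 12, 0.10
NEW_CORES = 2 * 8  # 16 cores per server, covered by one Datacenter license

print(f"busy cores today:      {busy_cores(OLD_CORES, OLD_UTIL):.1f}")
print(f"projected utilization: {projected_utilization(OLD_CORES, OLD_UTIL, NEW_CORES):.1%}")
```

This prints 1.2 busy cores and a projected 7.5% average utilization, which leaves plenty of room even before the faster cores are taken into account.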

First Scenario

For this 4-node scenario, the following workload is in play:

- 380 virtual machines, all Windows Servers

- 2.5 TB memory

- 1900 virtual CPUs

- 75 TB storage usage

Some of the virtual machines are highly available SQL servers hosting some huge databases that require pretty high disk IO. They run SQL Always On in 2 clusters with 3 nodes each, and each SQL node has 80GB of memory and 1 TB of disk. The rest are regular domain servers, app servers and so on that do not require much CPU, memory or disk, except for the Exchange servers.
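To put the SQL servers in perspective, their Always On footprint can be tallied against the scenario totals. This is a minimal sketch; it counts 1 TB as 1024 GB for the memory share, which is an assumption for a rough comparison.

```python
# Scenario totals from the list above.
TOTAL_MEMORY_GB = 2.5 * 1024   # 2.5 TB, counting 1 TB as 1024 GB (assumption)
TOTAL_STORAGE_TB = 75

# SQL Always On: 2 clusters x 3 nodes, each with 80 GB memory and 1 TB disk.
SQL_CLUSTERS, NODES_PER_CLUSTER = 2, 3
SQL_VM_MEMORY_GB, SQL_VM_DISK_TB = 80, 1

sql_vms = SQL_CLUSTERS * NODES_PER_CLUSTER
sql_memory_gb = sql_vms * SQL_VM_MEMORY_GB
sql_storage_tb = sql_vms * SQL_VM_DISK_TB

print(f"SQL VMs:     {sql_vms}")
print(f"SQL memory:  {sql_memory_gb} GB ({sql_memory_gb / TOTAL_MEMORY_GB:.0%} of total)")
print(f"SQL storage: {sql_storage_tb} TB ({sql_storage_tb / TOTAL_STORAGE_TB:.0%} of total)")
```

So 6 of the 380 VMs account for roughly a fifth of the memory but only about 8% of the capacity; what they really demand is disk IO rather than space.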

With the All Flash option above we would not have enough disk with 2 or 4 TB SSD capacity drives, and we would need 8TB SSD capacity drives, which are extremely expensive, and we do not need an all-flash setup. So I will choose the hybrid option with NVMe + HDD for this setup. NVMe gives me good write and read performance: all writes go to the NVMe cache, and since the capacity tier is HDD, all hot reads are also served from the NVMe. Also, the price difference between NVMe and SSD cache devices is pretty low compared to the performance gain you get with NVMe.
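The capacity argument against All Flash can be checked with a quick calculation. This sketch assumes the same 12 drive bays per node, all filled with capacity SSDs (no separate cache tier), a three-way mirror, and one drive per node kept free as reserve; these are assumptions for illustration, not a specific Lenovo configuration.

```python
def mirror_usable_tb(nodes: int, drives_per_node: int, drive_tb: float,
                     reserve_drives_per_node: int = 1, copies: int = 3) -> float:
    """Usable TB of an N-way mirror after setting aside reserve drives."""
    raw_tb = nodes * (drives_per_node - reserve_drives_per_node) * drive_tb
    return raw_tb / copies


REQUIRED_TB = 75  # storage in use today

for size_tb in (2, 4, 8):
    usable = mirror_usable_tb(nodes=4, drives_per_node=12, drive_tb=size_tb)
    verdict = "fits" if usable >= REQUIRED_TB else "too small"
    print(f"{size_tb} TB SSDs: {usable:.1f} TB usable -> {verdict}")
```

Under those assumptions, 2TB and 4TB drives land at about 29 and 59 TB usable, short of the 75 TB in use, while 8TB drives give about 117 TB.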

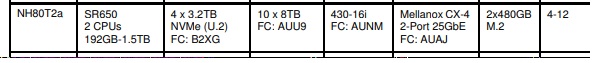

Based on the Lenovo WSSD support matrix, this lets me choose WSSD config NH80T2a, which is based on the 12-bay SR650 server. Now that we have the platform, let's look at the options it gives us.

We have 4 x 3.2TB NVMe and 10 x 8TB HDD per node, giving us 12.8TB of cache and 80TB of raw capacity per node. After keeping one 8TB drive per node free as reserve capacity, a three-way mirror gives us 24TB of usable storage per node, or 96TB across the cluster. That puts the cache at a bit over 50% of usable storage per node, while the cache-to-raw-capacity ratio is about 16%. This gives us enough disk, with 21TB of extra space for further growth.
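The capacity and cache figures above can be reproduced in a few lines. This sketch assumes one 8TB HDD per node is held back as reserve capacity, which is what makes the 24TB-per-node figure work out for a three-way mirror.

```python
# Per-node drive layout of the NH80T2a configuration described above.
NODES = 4
NVME_COUNT, NVME_TB = 4, 3.2   # cache devices
HDD_COUNT, HDD_TB = 10, 8      # capacity devices
RESERVE_HDDS = 1               # one HDD per node kept free as reserve (assumption)
MIRROR_COPIES = 3              # three-way mirror
REQUIRED_TB = 75               # storage in use today

cache_per_node = NVME_COUNT * NVME_TB
raw_per_node = HDD_COUNT * HDD_TB
usable_per_node = (HDD_COUNT - RESERVE_HDDS) * HDD_TB / MIRROR_COPIES
usable_cluster = NODES * usable_per_node

print(f"cache per node:        {cache_per_node:.1f} TB")
print(f"raw capacity per node: {raw_per_node} TB")
print(f"usable per node:       {usable_per_node:.0f} TB")
print(f"usable in cluster:     {usable_cluster:.0f} TB")
print(f"cache vs usable:       {cache_per_node / usable_per_node:.0%}")
print(f"cache vs raw:          {cache_per_node / raw_per_node:.0%}")
print(f"headroom over {REQUIRED_TB} TB:  {usable_cluster - REQUIRED_TB:.0f} TB")
```

This prints 12.8TB of cache, 24TB usable per node, 96TB in the cluster, cache ratios of 53% and 16%, and 21TB of headroom, matching the figures in the text.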

When it comes to memory, the best approach is to populate either 6 or 12 DIMM slots per CPU to get the best memory bandwidth between the DIMMs and the CPU. We are using 2.5 TB of memory today, and 24 x 32GB DIMMs per node across 4 nodes would only give us about 2.3 TB of usable memory, since we always keep at least 1 node's worth of memory free for servicing or for a node failure. That leaves us with 24 x 64GB DIMMs per node, giving us 4.5 TB of usable memory.
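The N-1 memory calculation above can be sketched as follows. It counts 1 TB as 1024 GB and ignores host OS and S2D memory overhead, both simplifying assumptions, so the real usable figure will be somewhat lower.

```python
REQUIRED_GB = 2.5 * 1024   # 2.5 TB in use today (1 TB = 1024 GB, assumption)


def usable_memory_gb(nodes: int, dimms_per_node: int, dimm_gb: int,
                     spare_nodes: int = 1) -> int:
    """Memory available to VMs with spare_nodes' worth held back.

    Ignores host OS and S2D overhead, so the real figure is a bit lower.
    """
    return (nodes - spare_nodes) * dimms_per_node * dimm_gb


# 24 DIMMs per node = 2 CPUs x 12 slots (6 channels x 2 DIMMs per channel).
for dimm_gb in (32, 64):
    usable = usable_memory_gb(nodes=4, dimms_per_node=24, dimm_gb=dimm_gb)
    verdict = "enough" if usable >= REQUIRED_GB else "not enough"
    print(f"24 x {dimm_gb}GB: {usable} GB ({usable / 1024:.2f} TB) -> {verdict}")
```

With 32GB DIMMs the three remaining nodes hold 2304 GB, below the 2.5 TB in use; with 64GB DIMMs they hold 4608 GB, or 4.5 TB.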

With CPU, we need to look at how many physical cores the old cluster has. It is a 6-node cluster with two 10-core CPUs per node, giving us 120 physical cores, and it averages about 15% CPU with nodes that are 3+ years old. With 1900 virtual CPUs, that is about 15.8 virtual cores per physical core. With the new Purley CPUs we could most likely get by with the Intel Xeon Gold 6144, an 8-core 3.5GHz CPU. The reason for choosing Gold over Silver is the sheer per-core performance of the Gold CPU: to keep up, we would most likely need a 10- or 12-core Silver CPU, which costs extra Datacenter licenses, and that money is better spent on the Gold CPUs. We should be able to keep the average CPU on each node between 10-20% with this setup. With 2 x 8 cores per node we need only 4 Datacenter licenses, whereas a 12-core setup would require an additional 16 two-core Datacenter licenses. With the 6144 we get 64 cores in total, which gives us about 29.7 virtual cores per physical core, but these babies should be able to handle it with ease.
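The core ratios and license counts above follow from simple arithmetic. The sketch below assumes the old nodes have two 10-core CPUs each (which is what 120 cores implies) and uses a simplified form of the Windows Server 2016 Datacenter per-core rule: every server is licensed for at least 16 cores, with additional cores sold in two-core packs.

```python
import math

VCPUS = 1900


def vcpu_ratio(vcpus: int, physical_cores: int) -> float:
    """Virtual CPUs per physical core."""
    return vcpus / physical_cores


def extra_two_core_packs(nodes: int, sockets: int, cores_per_socket: int,
                         base_cores: int = 16) -> int:
    """Two-core Datacenter packs needed beyond the 16-core minimum per server.

    Simplified: assumes at least 8 cores per socket, so the 8-core
    per-processor minimum never applies.
    """
    extra_per_node = max(0, sockets * cores_per_socket - base_cores)
    return nodes * math.ceil(extra_per_node / 2)


old_cores = 6 * 2 * 10   # 6 nodes x 2 CPUs x 10 cores (assumed layout)
new_cores = 4 * 2 * 8    # 4 nodes x 2 x Xeon Gold 6144 (8 cores each)

print(f"old ratio: {vcpu_ratio(VCPUS, old_cores):.1f} vCPU per core")
print(f"new ratio: {vcpu_ratio(VCPUS, new_cores):.1f} vCPU per core")
for cores in (8, 12):
    packs = extra_two_core_packs(nodes=4, sockets=2, cores_per_socket=cores)
    print(f"2 x {cores}-core per node: {packs} extra two-core packs")
```

This prints ratios of 15.8 and 29.7, and shows that the 8-core option needs no extra packs while a 12-core option needs 16 across the 4 nodes.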

For networking we will use the recommended Mellanox CX-4 10/25Gbit NICs along with 2 Lenovo NE2572 switches with 48 10/25Gbit ports each. These are the same switches Lenovo uses for their Azure Stack, so they are fully supported for SDN, and their CNOS OS is pretty similar in the CLI to Cisco's or Dell's new OS.

This gives us a well-performing cluster that should crank out about 400-500k IOPS per node in a 4K read VMFleet test, with enough CPU, memory and disk to grow a bit. It can also be scaled out with up to 12 more nodes if needed.

Recap for this scenario

CPUs: look at your average CPU utilization, how old the current CPUs are and how many cores they have. This should give you an indication of which of the new Purley Xeon Scalable processors you need. There is no need to overdo the number of cores if you don't need them; go with a Gold CPU with a higher clock frequency.

Memory and disk: choose more than you need, as this is where the growth in a normal setup will be. On All Flash vs Hybrid: all-flash 8TB drives are about 10-20 times more expensive per disk, so unless you need it, go NVMe + HDD.

Networking: go with a minimum of 25 Gbit for hybrid. 25 is the new 10, and the prices are almost identical these days.

Stay tuned for Part 2, where we will talk about adding nodes to an existing cluster and what you should and should not do.